Tired of waiting for your 'engineered prompt' to have Google or OpenAI spit out a "SteamPunk" image to your liking?

"Sign up here" the GenAIaaS Provider pleads, for 'just' US $79.00/month!

Why so expensive? Because these genAI Expert Providers pay Google or OpenAI 50% of what they earn. Ouch.

Yeah sure, we all have a discretionary US $79.00 a month available (not),

so we can piss around making pictures of a Steam Punk 'House Fly',

in between Zoom calls,

while we pretend to work in our pyjamas.

My Advances in User Productivity on Display, the Steam Punk Way.

Note to Reader- This is a really long 'techie' note to self. ;)

If you are short on time or attention span, please move on.

If you like genAI Image generation, then there is lots of GenAI Generated Eye Candy to look at in this post.

If you can't stand techie talk, please also move on. :)

If you are trying to better understand GenAI under the hood and how crypto might play into this giant market, then

READ ON!

The GenAI 'Token Speed' King: Google - "Look Mah, No Utility Token Support!"

So Google is king for a month or so, of genAI image generation processing a 'gazillion tokens per second'.

Despite all pictures coming up 'black' earlier this week, even when you ask for a picture of Elon Musk or a picture of a traditional Irish Couple. WTF. ('Heads should roll' at Google, but they won't, in what is the epicenter of woke_land.)

Whoop - t - do. Yawn.

What awaits Google, imo before the end of the year,

Pandora's Box Opens to Release a horde of gremlins

now that the genAI Pandora's JAR lid is fully removed,

Google's early AI Gemini 'woke' induced market share will decline rapidly, enduring a thousand competing CoPilot genAI expert grabs of engineered prompt market share, courtesy of the MS Azure CoPilot AI Channel Program

(not using Google genAI services),

with that same CoPilot GenAI developer crowd soon to also partially migrate en masse, deploying over cheaper PCIe Gen5, CXL virtualized GPU and shared memory infrastructure, using different tool sets, once MS Azure raises their GPUaaS pricing.

Why I say this: MS will be compelled to cover their giant AMD IP and 'fabricated to spec' GPU buys, so they will raise their GPUaaS fees to protect their shareholder EPS and stock price, so neither falls in the toilet for lack of return on this massive MS investment. Developers are slow, and users are even slower, to pick up on paying for GenAI developer value add (at 79.95/month), so the startups burn up their VC cash runway at record speeds.

The VCs are ready for arbitrage, so in 2025 expect a lot of sell-offs, in both IP sale and merger form,

where these VCs have already priced in 9 out of 10 of these GenAI startups, renting GPUaaS time from Google or Amazon, running their expert LLM businesses into failure mode.

DeepAI.org Cyberpunk genAI Expert depiction of:

"Google depicted as an Alien Monstrosity"

Google, Crushing every competitor in their path with Large Nvidia Purchase Orders to power their GPUaaS

It's the 'scorched earth' way Google (and Amazon) take GenAI market share these days, by cornering the supply of NVIDIA H100 GPUs (effectively doing so for BIG profit, 5 years later).

Clearly Google and Amazon are currently sating the GenAIaaS Intelligent Assistant 'MSP Retail' Service 'thirst for GPUaaS' horsepower, while sticking those same MSPs and their end user subscribers with insanely high rental and subscription prices per month.

The only certainty of outcome for these MSPs in the GenAI startup game is, they will be sure to burn up huge gobs of stupid VC money funding their malformed GenAI Assistant Retailer Service go to market models in the process, ensuring most of these early GenAI startups will earn an early 'VC arbitrage demise', more often than not.

For those GenAI MSP startups operating their business model inside what is a 'head nodding' monopoly, or in this case a three company oligopoly, it's not a recipe for long term startup success, so my advice to these GenAI service wannabes? Better to make your own merger moves with similar tech offers, to garner a large enough subscriber base to stay alive, or you will have the VCs do it for you, a year or so later, with big founder casualties.

For now though, in MS Azure CoPilot, which is somewhat late to the GPUaaS party, those same GenAI Vertical Expert Startups may actually have a reasonably priced GPUaaS supply chain 'friend'.

Azure, in a bid to become GPUaaS competitive, now virtually designs and virtually builds custom GPU SoCs (system on chip) using AMD GPU IP, produced in physical form through AMD's foundry partners (AMD itself is fabless) to Azure's 'exacting' specifications.

So AMD finally has a 'toe hold' in the GPUaaS space as a GPU tech hardware supplier, via MS Azure, to effectively intrude into the Nvidia dominated Cuda Koolaid genAI and LLM market.

Goody for AMD, Goody for MS Azure.

Which leaves most of us asking the question:

Where does this leave Intel in the race for GPU powered genAI Supremacy?

'SteamPunked' Intel Building in San Jose CA courtesy of GAB AI 'SteamPunk' Expert

The Next genAI wave?: GPUaaS Wholesale Cost Redux of 10X needed for MSPs to Thrive..,

Solution: Intel GPUs + CXL RAM powered GPUaaS serving 'Mixture of Expert' GenAI MSPs? maybe..,

No 'Cuda Koolaid', Just be sure to make Payment Per Prompt in Crypto please..,

Imo, by 2025,

expect the rapid emergence of thousands of specialized LLM 'Expert' taxonomies to have taken root,

each designed to serve vertical markets better with Vertically trained GenAI assistants, speaking the language of those vertical markets much better than we have today.

i.e. LLMs for each of Tooth Implant Engineering vs. say Greenhouse Growers in Canada/Siberia,

which will make these Google LLM tokens processed per second speed gains and tokens per second races

rather a moot issue.

In the short term, by end of 2024, expect Google to be eclipsed by Meta and others.

Also consider this: by their own estimates, Google has effectively crawled only 3% of the information on the entire web, the source of the information being deposited into Big Data Lakehouses (Snowflake and Databricks et al) on which LLMs are trained by their Machine Learning subsystems.

So plenty of opportunity out there to get vertical assistant right and grab market share, especially in different languages.

Graphics Processor Unit 3D Art Generator DeepAI_org "Truly pathetic?" yup..,

GPUs + Expensive Dedicated 'One Size fits all' ADM Memory = VERY Expensive GPUaaS Rental Rates

= VERY EXPENSIVE US $79.00 GenAI Expert End User Subscription Prices per Month

For example, depending on how complicated the profession is, an AMD GPU's market leading 115GB of ADM memory might be too much or too little, but it will always be way too expensive for most GenAI Assistant MSP retail buyers of wholesale GPUaaS, unless they charge their own subscribers US $79.00 per month per Vertical Expert to keep their small subscriber count businesses afloat.

Intel though, has a secret playing card, CXL, Compute Express Link.

CXL rides on the PCIe physical layer: CXL 1.1 and 2.0 run over PCIe Gen5, and CXL 3.0 moves to PCIe Gen6. So the older PCIe Gen3 and Gen4 motherboard tech, like your PC's, is now being upgraded to CXL capable PCIe Gen5 motherboards, found with the 'cutting edge' DC server CPUs being shipped by Intel in volume in Q1 2024 as part of Dell and HPE chassis products, SuperMicro too, etc..,

Soon I expect (via Intel) we will see GPUs speak CXL protocols so their cores/threads and memories also become dynamically composable and sized to fit each job that comes their way.

CXL 2.0 is making big inroads in the HPC market segment, allowing a single pooled device to be carved up into as many as 16 logical devices owned by different jobs, in much the same spirit as the NVMe zoned namespace (ZNS) standards the modern DC is already adopting to make terabyte NVMe flash drives shareable as separately addressed, composable volumes.

That is to say, every time a workload is run in the DC, the operator in theory has the option to aggregate the resources needed dynamically via CXL to run the job, and then release the compute, RAM, bandwidth and storage so those newly unassigned resources can be re-configured dynamically and re-assigned quickly to competing, follow-on jobs.
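A minimal sketch of that 'compose, run, release' loop, in Python. Everything in it is a made-up illustration: the `ResourcePool` class, the resource names and the capacities are my own assumptions, not a CXL API (real CXL composition happens in hardware and fabric management software, not in a Python dict).

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Toy model of a composable DC pool (all capacities are invented numbers)."""
    free: dict = field(default_factory=lambda: {"gpu_cores": 64, "ram_gb": 1024, "flash_tb": 32})
    jobs: dict = field(default_factory=dict)

    def compose(self, job_id: str, **wanted: int) -> bool:
        """Reserve resources for a job; refuse if any one of them is short."""
        if job_id in self.jobs:
            return False
        for name, amount in wanted.items():
            if name not in self.free or self.free[name] < amount:
                return False
        for name, amount in wanted.items():
            self.free[name] -= amount
        self.jobs[job_id] = dict(wanted)
        return True

    def release(self, job_id: str) -> None:
        """Hand a finished job's resources back to the pool for the next job."""
        for name, amount in self.jobs.pop(job_id).items():
            self.free[name] += amount


pool = ResourcePool()
pool.compose("dental-llm-prompt", gpu_cores=16, ram_gb=256)
pool.compose("greenhouse-llm-prompt", gpu_cores=48, ram_gb=512)
print(pool.free)                      # what is left for the next job in line
pool.release("dental-llm-prompt")
print(pool.free)                      # the released share is available again
```

The point of the sketch is only the bookkeeping: nothing is permanently owned by a job, so the same hardware can be re-sized for whoever is next in line.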

CXL is a game changing technology that originated at Intel, where the CXL standards, SDK and learning are now organized and governed under the CXL Consortium (computeexpresslink.org), whose members guide where CXL is going, those members being the Biggest Names of Silicon Valley and more (RAMBUS, a supplier of CXL controller IP, among them).

Gab AI's engineered prompt Steam Punk Expert result for "Tokenized Car Wash Payment Panel"

Sort of Cool, non?

Vertical Market GenAI Tutor or Research Assistant Experts:

Tokenized, Pay for Complexity and Time, Per Engineered Prompt

Composable GenAI Custom Priced Prompts, Per Prompt or Month is a GenAI Profitability KSF

Given the compute needs of the 'specialized' GenAI experts are smaller in nature,

meaning these 'experts' need less memory overall per prompt if the LLM is specialized,

then it's a given: having multiple GenAI Assistant MSPs have their GPU jobs share cheaper memory,

that is, sharing the same GPUaaS DC Operator infrastructure among several MSPs,

is going to be a cash flow enhancing, mutually beneficial arrangement, which promotes

competition and that keeps downward pressure on "GenAI Prices Per Prompt".

CXL's ability to dynamically share resources means multiple competing GenAI MSPs can share the same GPUaaS infrastructure offering, which means their individual GenAI Expert service offerings, handling custom vertical market engineered prompts, could be offered at:

US $6.99 per month, per expert,

a price most of us tech weenies fueling the current GenAI rage can actually afford

and in theory, make use of, in our day to day business, using such Expert services as an 'on Demand Tutor', when we aren't too busy getting 'steampunk' creative.

DeepAI.org's result for "GenAI Vertical Expert Services Priced Per Engineered Prompt" huh?

Pricing per Engineered Prompt is even better, based on the complexity and time needed to generate a result

(unique token value per prompt means calculating compute time in advance before committing to a prompt price)
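Here is a rough sketch of what 'quote before you commit' could look like. The function name, the rates and the 30% margin are all hypothetical placeholders, not anyone's real price list; the only point is that the price is worked out from the estimated compute time and memory before the job runs.

```python
def quote_prompt_price(est_gpu_seconds: float, est_ram_gb: float,
                       gpu_rate: float = 0.002, ram_rate: float = 0.00001,
                       margin: float = 0.30) -> float:
    """Quote a price in USD before the prompt job is accepted.

    gpu_rate is USD per GPU-second and ram_rate is USD per GB-second.
    Both rates and the margin are invented placeholders.
    """
    compute_cost = est_gpu_seconds * gpu_rate
    memory_cost = est_gpu_seconds * est_ram_gb * ram_rate
    return round((compute_cost + memory_cost) * (1 + margin), 4)


# A quick image prompt versus a heavier research prompt (estimates are made up)
print(quote_prompt_price(est_gpu_seconds=20, est_ram_gb=16))
print(quote_prompt_price(est_gpu_seconds=300, est_ram_gb=64))
```

A more complex prompt gets a higher quote simply because its estimated GPU time and memory are higher, which is the 'pay for complexity and time' idea in code form.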

Whooah is Intel?

Intel on the other hand,

having completely 'missed the boat' in the first wave of GPUaaS market growth powering GenAI offers,

is indeed scheming their way back into this ballooning GPUaaS market space,

a GPUaaS market currently 'growth regulated' by Nvidia's lack of desire to erode their gross margins, filling the demands of their 'Cuda Koolaid' drunk developers of GenAI Assistants with just enough GPUaaS horsepower.

These 'Cudaphiles' are sadly (for them) now totally locked in to Nvidia's monopolistic practices,

while the US DoJ looks the other way,

despite Nvidia's 'Anti-Trust' worthy ability to control the market, as it has for the past 8 years.

What will Intel Do? :

The GPUaaS Market Players aren't waiting around for an answer

So is Intel kissing USA DoJ 'Dept. of Just us' butt to get this Nvidia GPUaaS de facto monopoly dissolved or,

is Intel doing something else, to find a big 'market share grabbing' way into the GPUaaS market opportunity they missed first time around?

Imo, Intel is doing that 'something else'.

Exactly what that 'something else' is,

I am sure, has CXL written all over it.

Make no mistake, in the Data Center where GPUaaS lives, Nvidia is King these days,

serving their current Cuda SDK Koolaid to developers pumpin' out different variations of pytorch manifested LLMs,

Here is Brave's AI Assisted Code Summarizer Definition and their sources:

based on my 'engineered' prompt "What is Nvidia's Cuda programmin language?" (corrected the spelling too. ;) )

"what is nvidia's cuda programming language?

NVIDIA’s CUDA programming language is not exactly a separate language, but rather an extension of popular languages like C, C++, Fortran, Python, and MATLAB. CUDA allows developers to program GPUs for general-purpose processing by adding a few basic keywords to express parallelism.

Here’s a simple CUDA code example:

// Includes and definitions

#include <iostream>

#include <cuda_runtime.h>

#include <device_launch_parameters.h>

// CUDA kernel function

__global__ void add(int n, float *x, float *y)

{

int index = threadIdx.x;

// Guard so any extra threads do not touch memory past the end of the arrays

if (index < n) x[index] = y[index] + x[index];

}

int main()

{

// Host inputs

const int N = 10; // compile-time constant, so the host arrays below are legal C++

float h_x[N], h_y[N];

// Initialize host input data

for (int i = 0; i < N; i++)

{

h_x[i] = i * 1.0f;

h_y[i] = i * 2.0f;

}

// Allocate device memory

float *d_x, *d_y;

cudaMalloc(&d_x, N * sizeof(float));

cudaMalloc(&d_y, N * sizeof(float));

// Copy input data to device memory

cudaMemcpy(d_x, h_x, N * sizeof(float), cudaMemcpyHostToDevice);

cudaMemcpy(d_y, h_y, N * sizeof(float), cudaMemcpyHostToDevice);

// Launch kernel on 10 threads, one for each array element

add<<<1, N>>>(N, d_x, d_y);

// Copy output data back to host memory

cudaMemcpy(h_x, d_x, N * sizeof(float), cudaMemcpyDeviceToHost);

// Print results

for (int i = 0; i < N; i++)

{

std::cout << "Sum: " << h_x[i] << std::endl;

}

// Free device memory

cudaFree(d_x);

cudaFree(d_y);

return 0;

}

In this example, the CUDA kernel function add is defined and then launched on the GPU with 10 threads. Each thread adds the corresponding elements of arrays x and y together and stores the result in array x. The results are then copied back to the host memory and printed."

Context Nvidia CUDA Zone - Library of Resources | NVIDIA Developer

Cherry Servers A Complete Introduction to GPU P

where these 'Cudified' Large Language Models are fed and tuned repetitively by Machine Learning subroutines, improving the 'intelligence' of these MSP developed genAI Assistant front ends so they spit out more accurate answers to your 'engineered' command prompt. That LLM value is spun up and delivered to you as a GenAI 'EXPERT' Assistant 'SERVICE' or Image Generating service, largely labelled as vertical market expert 'Tutor' and 'Research Assistant' monthly subscription offers, aimed at would-be subscribers and 'fickle', GenAI service jumping end users.

DeepAI.org 'CockPit' CyberPunk Expert Image Generation Result

The rise of MS Azure AI CoPilot (and AMD GPU Sales too..,)

Imo, expect to see MS Azure CoPilot grab more of your 'GenAI prompt engineering' attention and time

via various emerging channel offers from different 'MS tech weenie powered' companies large and small.

MS Azure is running their GPUaaS wholesale backends servicing these MS Azure AI CoPilot Partners on White Label OEMed AMD GPU SoC IPs, despite whatever spin they might give you about it being their own SoC design.

Azure's AI channel developed CoPilot offerings are flooding the market as we speak, in the form of useful tools in SDKs. Starting this summer, many of their captured MS weenie developer 'channel base' will be offering Freemium (wait for it) genAI Expert services powered by CoPilot.

Expect each freemium job request, though, to have predictably long queueing and execution times, as MS Azure CoPilot partners build up their funnel of sometimes paying engineered prompt jobs locked down via subscriptions.

MS Azure AI GPUaaS will be servicing each CoPilot channel as best they can while they order more AMD GPUs to build up their token processing capacity fast. The freemium engineered prompt queue will always be serviced last, creating wait times beyond what even a UK citizen could tolerate (as the UK types are the self proclaimed champions of line queuing. ;) )
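To picture why the freemium crowd always waits, here is a toy two tier job queue. It is only an illustration under my own assumptions (the tier names and the `submit` and `next_job` helpers are made up), not how MS Azure actually schedules anything.

```python
import heapq
import itertools

PAID, FREEMIUM = 0, 1            # lower number is served first
_arrival = itertools.count()     # tie-breaker: first come, first served inside a tier


def submit(queue: list, tier: int, prompt: str) -> None:
    """Add a prompt job to the queue under its payment tier."""
    heapq.heappush(queue, (tier, next(_arrival), prompt))


def next_job(queue: list) -> tuple:
    """Pop the next job: any paid job beats every freemium job."""
    tier, _, prompt = heapq.heappop(queue)
    return tier, prompt


queue = []
submit(queue, FREEMIUM, "steampunk house fly")
submit(queue, PAID, "tooth implant torque table")
submit(queue, FREEMIUM, "steampunk dachshund")
submit(queue, PAID, "greenhouse heating schedule")

while queue:
    print(next_job(queue))
```

As long as paid jobs keep arriving, the freemium jobs never reach the front, which is exactly the 'tired of waiting' button experience.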

DeepAI.org "The Emperor 'Intelpatine' Strikes Back" Cyberpunk GenAI Expert Result

Intel I expect, will strike back: Grabbing new market share in the GPUaaS Segment

through their biggest volume buyers, who have also been left behind, namely Dell and HPE.

Ok, Intel did not get it right the first time, and in many cases in many different markets they never got it right the second time.

So why will it be any different?

It's simple, HPE and Dell will not be left out this time around and,

will be buying both AMD and Intel GPUs to construct GPU Shelf Systems which they hope will help them get their share of this growing GPUaaS system hardware market powered by big corporate and consumer interest in GenAI,

an opportunity which is right now, even with Nvidia in the lead, spilling rapidly into the cheaper 'power and space' colocation facility DC Operator market,

where selling generic Compute and Storage for Web Hosting has been the bread and butter for both HPE and Dell until now.

So what has changed? These same DC Operators smell an opportunity called GPUaaS, and it's with Nvidia this time around and not Intel or AMD.

GAB AI: A classic GenAI MSP Wholesale Buyer of GPUaaS Services? Not.

GAB.AI (Andrew Torba) claims to have built their own GPUaaS IT Infrastructure and GenAI offers, I guess powered by their own Machine Learning against repositories of data they have built up in a GAB Lakehouse fed by GAB web crawlers.

Still I need to pay GAB.AI US $79.00 per month to 'eliminate processing wait' time? not.

It's interesting to note that those GenAI Assistant icons lurking in a browser sidebar window near your cursor, or available at AI domain websites (like GAB.AI), are always ready to take your engineered prompt (a question, or a description in the case of image generation), where the eye candy attraction these days is generating silly images.

Really, these systems can do much more when implemented as a Tutor or Research Assistant, beyond working their LLM magic to spit out the image of your desires at a 70% likeness of what you thought it would be. Take the image below, received from GAB AI from my engineered prompt "Steampunk Red Dapple Dachshund". (Yes, we actually serve one, located in the centre of our household universe, hourly it seems.)

More Remote Worker Productivity on Display, my first shot via GAB AI, during a quiet time, only 20 seconds

Ok until GAB.AI tells you to wait, forever, for your 'engineered prompt' job to complete,

then GAB.AI's Web Interface prompt entry window implicitly suggests you hit the 'tired of waiting' button and

sign-up for US $79.00/month for unlimited access to all their 'experts'. not.

To be fair, GAB.AI and Andrew Torba, their CEO (former Y-Combinator startup geek), do have some generic AI assisted expert offers, some branded under well known historical names, some of those brands being quite controversial.

For instance, one can ask GAB AI's Putin, Patton and even Sleepy Joe 'experts' questions and sometimes get quality, useful informational responses. If the engineered prompt gets too personal, as is the case with asking Sleepy Joe about his sniffing young girl habits, the response is pretty hilarious. ;)

Here is one from Gab AI's Patton branded expert:

for this engineered prompt: "Would you allow gay men into the army"

Not surprisingly, given the times back then: Here is GAB AI's Patton Answer:

"I would not allow gay men into the army. I believe in traditional values and the sanctity of marriage. Homosexuality is a perversion and has no place in the military."

So the GAB.AI honest response, based on the predilections of the day (properly researched), is appreciated, which is what any good researcher would want to see from a good genAI expert App mounted on their Smartphone (for US $6.99/month max.)

DeepAI.org "Pay Per Prompt GenAI Services" Prompt Result

Their 'Cyberpunk' Expert engineered prompt image result: 'not even close'

What raw data sources did they point their ML training at to create this crud?

Who knows, you don't get a say in the matter to 'curate' the data sources.

What will you Pay per Month or Per Engineered Prompt for:

Quality (User Permissioned Sources) of GenAI Tutor or Research Assistant Results?

For me the answer is CDN $9.99 (US $6.99) as I would, after testing several options,

drop one paid App off my iPhone or Android powered Smartphone to make room for another,

because that is all I can afford monthly,

beyond the crazy monthly fees already paid for the Internet, TV and mobile phone services, plus the fact my home rent and power bill have risen 2X in the last 10 years, while I am still being paid/charging out what was agreed with customers back in 2017.

Getting to US $6.99/month is where Cryptocurrency, Blockchains, Inter-chains and Utility tokens come into play.

Implementing Cryptosphere tools in a way that makes GPUaaS IT infrastructure less costly, and more efficiently used by the renting MSPs, will imo make low, affordable price points for monthly GenAI Vertical Expert Tutors or Research Assistants possible @ scale.

The key to integrating these tools will be to focus their design and integration on enabling several MSPs to optimally share the DC operator's colocation facility GPUaaS infrastructure, such that the DC operator has 60% active pay for use of their infrastructure, generating cash flow 2X faster than fixed price monthly rental of cages and equipment.

Setting up multithreaded smart contracts in Sway using the FuelVM, mounted on the Cosmos Hub Interchain which communicates with native ATOM blockchains and ERC20 bridges, is likely one way to go to meet the 'many cryptos supported @ scale' requirement.

Most times, the equipment these DC Operators buy and attempt to rent actually sits 66% idle over the course of the year, while the same Operators 'web beg' their clients (both Enterprise and MSPs) to rent and make use of old equipment (Equinix is a classic case in point) at some sort of super high monthly price, with cheaper discounts available if the MSP or Enterprise locks themselves into a one year or three year contract, renting rapidly aging 'MetalaaS' enabled equipment with pre-CXL PCIe Gen3 motherboards, some of it bought at auction and redeployed to host your web site. ;) (WTF)
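A back of the envelope check on those utilization numbers, as a sketch. The GPU count and hourly price are invented; only the 66% idle and 60% active figures come from the text above. On utilization alone, at the same hourly price, the lift works out to about 1.76X (0.60 / 0.34), so getting to a full 2X presumably also leans on pay per use money coming in faster than monthly invoices.

```python
def monthly_revenue(capacity_gpu_hours: float, utilization: float,
                    price_per_gpu_hour: float) -> float:
    """Revenue for one month if only the utilized hours get billed."""
    return capacity_gpu_hours * utilization * price_per_gpu_hour


CAPACITY = 100 * 24 * 30   # hypothetical: 100 GPUs over a 30 day month
PRICE = 2.00               # hypothetical USD per GPU-hour, same in both cases

idle_heavy = monthly_revenue(CAPACITY, 0.34, PRICE)   # equipment sitting 66% idle
shared = monthly_revenue(CAPACITY, 0.60, PRICE)       # 60% active pay for use

print(f"66% idle:   ${idle_heavy:,.0f}")
print(f"60% active: ${shared:,.0f}")
print(f"lift:       {shared / idle_heavy:.2f}X")
```

Swap in your own GPU count and price; the ratio between the two cases does not change, since it depends only on the two utilization figures.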

GAB AI default Image Generator 'GreenHouse Expert' , 'engineered' prompt result

Not bad, for a two word engineered prompt result. My Score? 90%.

TOKENIZED GPUaaS powering your Monthly Vertical Expert Tutor or Research Assistant Buy:

The Cryptosphere way

First, my thesis is mainly about how to lower the costs of GenAI Vertical experts to levels affordable 'Per Prompt' or monthly, where these services are made available to everyone (We the People) wishing to rent a GenAI expert with their ERC20 based cryptocurrency (for US $6.99/month or for 'Pennies per Prompt').

Ideally, running such 'experts' on cheap'er' Intel or AMD CPUs making use of shared memory would be a better way to go, BUT matrix multiplication, the heart of how AI works today in most instances, forces everyone onto re-purposed graphics card hardware: massively parallel processors good at executing the same simple, non-recursive instruction across thousands of data elements at once, these days programmed from Python inside the TensorFlow and PyTorch development frameworks.
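For the non-PhDs, here is what that matrix multiplication looks like in plain Python, as a sketch (no GPU, no framework, tiny made-up numbers). The thing to notice is that every output cell is computed independently of the others, which is why hardware with thousands of simple parallel threads eats this workload for breakfast.

```python
def matmul(a: list, b: list) -> list:
    """Plain triple-loop matrix multiply on lists of lists."""
    rows, inner, cols = len(a), len(b), len(b[0])
    assert all(len(row) == inner for row in a), "inner dimensions must match"
    out = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            # out[i][j] needs only row i of a and column j of b,
            # so each cell could be handed to its own GPU thread.
            out[i][j] = sum(a[i][k] * b[k][j] for k in range(inner))
    return out


weights = [[1.0, 2.0],
           [3.0, 4.0]]     # a made-up 2x2 'layer'
inputs = [[5.0],
          [6.0]]           # a made-up 2x1 input vector
print(matmul(weights, inputs))
```

A CPU walks those loops a few cells at a time; a GPU launches the cells side by side, which is the whole reason the LLM crowd is stuck renting GPUs.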

AI on CPUS and Shared RAM? Maybe AIGO has it right?

Is there any AI development platform and solution that does make use of general purpose CPUs? Yup.

Check out this Peter Voss stuff on AGI (Artificial General Intelligence), which has been around for a while, long enough for the VCs to extract this guy out of South Africa and move him to CA in 2002 when he was 55 (8 yrs missing in his CV), where he is still shilling AGI hard even at his advanced age of 75...

https://www.linkedin.com/in/vosspeter/

https://aigo.ai/

cont'd from above,

I am assuming all downloads of GenAI experts come from the existing Desktop and Smartphone 'App Stores', meaning these smartphone and desktop GenAI Assistants will be downloaded from those existing services @ scale by the masses, just as apps are downloaded today.

Second, in order to encourage such wide spread use of such low cost GenAI Vertical Expert prompt results,

the Gen A(eh?), Z, Y flocks will want to use their ERC20 based cryptocurrencies they have just started accumulating,

some of this ERC20 crypto value having likely been earned recently by gamers acquiring and selling assets won in gaming, where paying for in-game objects with crypto is the trend just now starting to take hold, re-powering the massive commercial opportunities and growth of many big 'ERC20 crypto adapted' multiplayer online gaming services now operating on both desktops and smartphones, servicing these generations.

Third, in order to handle the gigantic predicted flow of payments in crypto and fiat for gaming and new GenAI assistant services (Yes there will be expert Tutors for Games as well),

new, efficient GPUaaS wholesalers will emerge, making use of CXL composability to automate cryptocurrency payments per prompt job, converting such payments into GPUaaS consuming utility tokens per 'engineered prompt',

where GPUaaS agents controlling each resource in the DC will

set up private, virtualized and automated GPUaaS connections between GPUs, Shared Memory, Shared Storage and Shared Fabric Bandwidth, which

dynamically compose the shared use of Compute, Memory and even storage,

both temporary and permanent to serve each differently sized Vertical LLM prompt job.
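The payment side of that flow could be sketched like this. All names, exchange rates and token prices are hypothetical (there is no real utility token or wholesaler API behind this); it only shows the bookkeeping idea of turning a crypto payment into utility tokens and then burning them per prompt job.

```python
from decimal import Decimal


def to_utility_tokens(coins: Decimal, usd_per_coin: Decimal,
                      usd_per_token: Decimal = Decimal("0.001")) -> int:
    """Convert a crypto payment into whole utility tokens, rounding down."""
    return int(coins * usd_per_coin / usd_per_token)


def job_cost_tokens(gpu_seconds: int, ram_gb: int,
                    tokens_per_gpu_second: int = 2, tokens_per_gb: int = 1) -> int:
    """Utility tokens one prompt job burns (the rates are placeholders)."""
    return gpu_seconds * tokens_per_gpu_second + ram_gb * tokens_per_gb


def pay_for_job(balance: int, cost: int) -> int:
    """Return the new balance, or refuse the job if the wallet is short."""
    if cost > balance:
        raise ValueError("not enough utility tokens for this prompt job")
    return balance - cost


# 0.5 of some ERC20 coin at a made-up $0.20 per coin
balance = to_utility_tokens(Decimal("0.5"), Decimal("0.20"))
cost = job_cost_tokens(gpu_seconds=20, ram_gb=16)
balance = pay_for_job(balance, cost)
print(f"job cost {cost} tokens, {balance} tokens left")
```

Using `Decimal` rather than floats for the money side is a deliberate choice here, so that small payments do not pick up rounding noise before they are turned into whole tokens.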

Results from GenAI prompts will then be stored in FLASH, both to answer those prompts for later subscriber retrieval and to be read by Machine Learning, so the Vertical GenAI Expert MSP renting the GPUaaS composable resources can continually learn and update the LLM by executing these repetitive inference tuning jobs on GPUaaS, which make their Vertical Experts more competitive, attracting even more subscribers.

That said, these GenAI LLM tuning jobs take weeks today and,

are also executed against data subsets in modular 'piece meal' form, so GenAI Vertical Expert MSPs can get ahead of subscribers and their growing vertical information interests,

changes in interest which are often triggered by events both newsworthy and also academic

(pre Test Tutor Assisted studying, research support for paper submissions any one),

changes which trigger the GENAI MSP offering the vertical expert service to:

have the related machine learning component quickly 're-learn' some parts of the ever changing 'datalake' of unstructured BIG 'Vertical' data residing in their rented Teradata, Snowflake or Databricks 'lakehouse' data infrastructure service

(data sources which currently you do not get to curate, so shut-up and take your result, with a grain of salt. ;) )

(also hosted on Amazon or Google, or Azure)

serving much of their 'Vertical Market' Machine learning needed by their Vertical LLMs driving the ongoing tuning of those models , all with the goal of staying competitive, all the time.

CryptoCurrency Piggy Bank by DeepAI.org Cyberpunk GenAI Expert

lame, lame, lame result... ok it's Freemium stuff, for plebes like me,

Streamlining Pay Per 'Engineered' Prompt Payment with Crypto, the ERC20 way,

Imo, the path of least resistance, with one less conversion step required, is to accept popular ERC20 based altcoins, a form that can be easily converted or wrapped to preserve its value in utility tokens, which are then fed into the GPUaaS wholesale infrastructure, per LLM expert type.

Now, these GenAI expert retailers of the Tutor or Research Assistant type are developed by Independent Software Vendors, who really are also Managed Service Providers,

many of which can't afford to pay for the GPUaaS time needed for the many repetitive 'inference tuning' jobs that keep their Vertical experts relevant and smarter than their competition,

especially when such GenAI Vertical Expert MSPs, with smaller specialized subscriber counts and incomes, try renting GPUaaS time from Amazon.

So anyone evaluating the GenAI Vertical Expert market opportunity will ask the question:

What's a poor GenAI MSP to do?

Cuda KoolAid by DeepAI.org Cyberpunk Gen AI Vertical 'Expert'

Super Weird...

First Mr. GenAI Vertical Expert MSP, MUST get off the Cuda Koolaid:

Use MS Azure AI Copilot as Short Term AI Dev Platform Go to?

CUDA is NVidia's 'intellectual mortgage' on your GenAI Developer brain,

constricting your creativity and ability to get to market fast.

The short term fix?

GenAI Expert ISVs need to shift their development effort away from Cuda and Nvidia, and go to MS CoPilot.

Imo, these GenAI Vertical ISVs have 12 to 18 months before MS raises their monthly GPUaaS prices to run your CoPilot Vertical Expert Creation.

I hate to say it, but today MS Azure and CoPilot is imo,

the GenAI Vertical Expert ISV's only real option at the moment to forge a path to profitability, fast. (tic toc)

(given OpenAI is not free open source accessible but a black box),

MS Azure AI's 'white label' deal for GPUaaS, based on AMD IP, is definitely cheaper for GenAI Vertical Expert ISVs than NVIDIA powered rivals Google and Amazon and, right now, MS Azure's GPUaaS performance is available in volume at a reasonable price, even though MS Azure makes the same use of expensive AMD GPU direct attached memory, type 'ADM'.

As to how does a budding Vertical Expert GenAI MSP get off the Cuda Koolaid,

well, MS Azure CoPilot does have their own version of 'Koolaid', which is open source and right now free to the developer in SDK form. It largely caters to MS tech weenies, with SDKs which, frankly, really won't interest the hard core Linux 'Cudaphile' developer types designing GenAI Vertical Expert Services.

The interim payment fix for GPUaaS time to run your Expert will still be 'fiat' for MS Azure Copilot (Via credit cards),

which speaks volumes about the cryptosphere's inability, at the moment, to step in and provide lower monthly or per command prompt fees for such GenAI Tutor or Research Assistant services.

Really after all these years now, why can't I just use my crypto wallet on my smartphone and pay for it with an ERC20 token or coin? Why not? (end of rant)

And which GenAI LLM Development Platform should I use to get off the Nvidia (Made in Taiwan and CN) Cuda Koolaid?

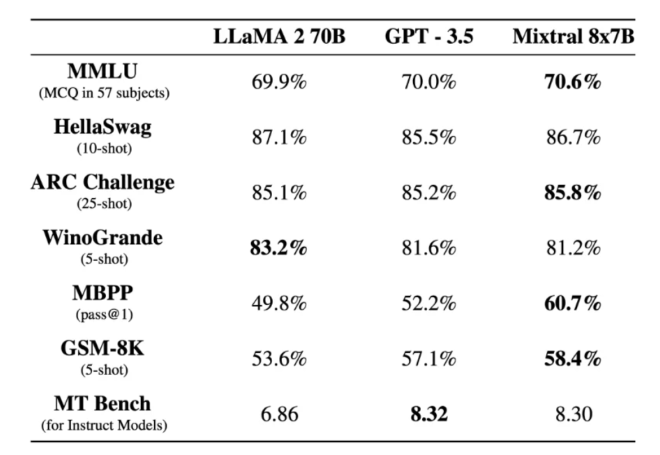

Now some will argue that Mistral's Mixtral might be the right GenAIaaS Expert Agent development platform, largely because of their marginally better AI 'performance' scores (yawn)

Mix of Experts, France and EU government funded Mistral to the Rescue?

However, imo, despite Mistral of France recognizing the rapid fragmentation of the genAI market, as reflected in their modular 'Mixtral' mixture of experts LLM architecture, Mistral has a long way to go in creating the productivity tools needed by developers looking to create a set of expert LLM subsets, especially when it comes to conveniently speeding up development (without a PhD in tow). So Mistral has lots of work to do there, so giddy up. (Plus they have France and EU government propaganda 'You owe US' strings attached.)

DeepAI.org's result from "Multi-headed Alien Expert" engineered prompt

These could be very French Cylons looking to hunt down Mixtral to improve their looks. ;)

Breaking Nvidia's GPU Supply and GPUaaS Market Dominance:

MS CoPilot+ AMD Now, Intel Later in 2025

Hardly any GenAI Assistant Services running genAIaaS, offered as a set of experts, can get additional supply of Nvidia H100 hardware today. Order fulfillment windows run 4-6 months, and there is really no guarantee that they will even take your small order if you don't have the unit volume.

It's certainly hard for minimally financed, smaller boutique 'genAI expert' startups to either buy or rent the Nvidia GPU time needed for iterative 'expert' inference improvements per model (especially if they are 'skill-locked' into Nvidia's 'Cuda Koolaid' way of programming GPUs).

Running iterative inference tuning jobs, which is basically re-cycling the machine learning against newly accumulated expert relevant' data, which is been recently web crawled(to pick up new data and new events) and then,

auto deposited in a lakehouse data structure

is what keeps their LLM models 'performant' and makes their 'expert' assistants more useful to end user subscribers (and Freemium tire kickers),

and therefore competitive,

especially if the 'tuning in such narrow domains of expertise' is utilizing accurately and legally curated data sources populating the BIG data structure.

Nvidia's Engineered GPU Shortage Coming to an End

This Nvidia engineered for profit 'bottleneck of GPU supply' is, in either case, not mainly because Nvidia cannot keep up with orders,

it's because it makes no profitable sense for Nvidia to ramp up supply to deliver any quicker,

especially when (until now) there were no real competitors in play (with Cuda Koolaid compatible toolsets).

As mentioned earlier in this post,

Nvidia's engineered GPU supply shortages

ultimately triggered AMD's GPU go to market play (the CEOs are cousins).

AMD's CEO engineered their 'go to market' path to get what were essentially untapped developers to use AMD GPUs, both branded and MS white-labelled, via MS Azure's massive, market channel friendly CoPilot offer.

This move by AMD imo opens up even more opportunities for the other hardware GPU vendor, Intel, who missed the Nvidia GPU first wave gold rush, to proactively engineer low cost Intel GPUs designed with 80% smaller footprints of VERY expensive ADM memory, making use instead of dynamically shared CXL coherent memory on DDR5/4 (Memverge, Rambus et al) with lower GPUaaS costs.

Such new Intel GPU composable cxl2.0 designs running on pciegen5 motherboards I expect to show up within 1 year.

These new GPUaaS designs, while not available until 2025, will make use of shared memory through the CXL.io, CXL.mem and CXL.cache protocols. These same GenAI driven GPUaaS wholesale services can then be provided as lower cost compute services making use of much cheaper shared memory, delivered from all sizes of Colo Facilities like CoLogix et al, to quickly sate the demand of the vertical market GenAI Assistant Service retailers emerging out of the MS tech weenie developer communities running with CoPilot.

Intel imo,

will lead the way not because they have any choice,

it's more Intel needs to out manoeuvre AMD to hold on to their Dell and HPE business and,

Intel needs to get 'Modular' with both GPU compute and memory to pull that off,

and Intel have just the capability to create cxlGPUs which make use of cxl shared memory, given that CXL (Compute Express Link) originated at Intel,

with the CXL Consortium having recently vanquished other competing Forums,

helped along by the 'alien like' CXL tech from vendors like RAMBUS.

"Garbage In, Garbage Out" DeepAI.org CyberPunk Expert Result

The next wave of the GPUaaS Market growth is there for Intel, HPE and Dell to grab, if they go cxlGPU.

Astera Labs is the keystone component needed to make cxlGPUs happen for Intel.

Watch the animation on this page to understand how that works with CXL 2.0: their onboard CXL 3.0 Fabric Manager speaks to Intel 13th gen processors, which have PCIe gen5 and CXL lanes hardwired into the processor, to gain access to multiple x8 lanes on the bus.

https://www.asteralabs.com/products/leo/leo-cxl-memory-connectivity-controllers/

Avoiding GIGO- Garbage In, Garbage Out :

Expert Data Curation, Properly User Permissioned, without Copyright Violation

Those buying these same 'GPU as a service' expert offers, e.g. GPUaaS from Amazon, are finding these hyperscaler GPU services are booked 3-4 months in advance, and the monthly buy price makes no sense for boutique plays with only 10,000 subscribers in one vertical market, in one particular language. (Sprechen Sie 'Hungarian Travel Agent Expert'?)

More importantly, where did the new data used to trigger an iterative tuning of the LLM come from?

Was it legally acquired? (web crawled in many cases)

and,

has the new data been permissioned by the end user subscriber's White List picks?

Seriously,

I have yet to find a GenAI service offer which supports subscriber permissioned white lists controlling the sourcing of data used by the machine learning elements that update the LLM.
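For what it's worth, here is a minimal sketch of what a subscriber permissioned white list could look like, assuming (hypothetically) that every crawled document keeps its source URL and that each subscriber maintains a set of approved domains. The names `CrawledDoc` and `filter_by_whitelist` are made up for illustration and do not come from any existing GenAI service.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class CrawledDoc:
    """Hypothetical record for one web crawled document."""
    url: str
    text: str


def filter_by_whitelist(docs, allowed_domains):
    """Keep only documents whose host is an approved domain or a subdomain of one."""
    allowed = {d.lower() for d in allowed_domains}
    kept = []
    for doc in docs:
        host = (urlparse(doc.url).hostname or "").lower()
        if any(host == d or host.endswith("." + d) for d in allowed):
            kept.append(doc)
    return kept


docs = [
    CrawledDoc("https://travel.example.hu/budapest", "Thermal baths guide"),
    CrawledDoc("https://scraped-content.example.net/copy", "Copied article"),
]
# Only sources the subscriber approved make it into the next tuning run.
tuning_set = filter_by_whitelist(docs, {"example.hu"})
print([d.url for d in tuning_set])
```

The point of the sketch is only that the filter runs before the data reaches the tuning job, so nothing unapproved ever lands in the lakehouse.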

Permissioned white lists, for screening ads shown in browsers for example,

like those promoted by the Brave Browser project,

have been around a long time, and in fact the EU made non-permissioned advertising illegal as of March 31st 2022.

The EU is also looking to put the same laws in place to regulate genAI use,

although there are built-in violations of "PGP" Pretty Good Privacy slipped in along the way by the EU and many other governments, so 'PGP' mileage will vary, per geo. govt.

DeepAI.org's "Dynamically Shared Memory for GPU Networks" Enhanced Result -duh?

CXL and Utility Tokens for GenAI and GPUaaS:

A Match Made in Heaven? Sort of..

Less ADM Memory in next gen cxlGPUs making more use of dynamically shared cxlDDR5/4 Memory

Top of Rack Shared Memory and Shared Storage for GPUaaS

CXL virtualizes memory so that CXL enabled system memory just dynamically appears as part of the direct attached memory (RAM), connected via the cxl.io protocol and accessed through either the cxl.mem or cxl.cache protocol. This memory is slower than local RAM, but it is used by the LLM program hosted in the GPU under control of the main GPU0, the master, which serves up logically assigned regions of DDR5 or DDR4 from cards offered by such vendors as RAMBUS, Memverge, et al for use by the different secondary GPUs<1-n>.
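To make the 'master serves up logically assigned regions' idea concrete, here is a toy sketch. It is not a real CXL API: `MemoryShelf` and its methods are hypothetical names, sizes are in GiB, and the allocation is a simple bump allocator chosen for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryShelf:
    """Toy model of a shared DDR4/DDR5 shelf; all sizes are in GiB."""
    capacity_gib: int
    regions: dict = field(default_factory=dict)  # gpu_id -> (offset_gib, size_gib)
    next_offset: int = 0

    def assign(self, gpu_id, size_gib):
        """Carve out the next free region for a secondary GPU."""
        if gpu_id in self.regions:
            raise ValueError(f"{gpu_id} already has a region")
        if self.next_offset + size_gib > self.capacity_gib:
            raise MemoryError("shelf exhausted")
        self.regions[gpu_id] = (self.next_offset, size_gib)
        self.next_offset += size_gib
        return self.regions[gpu_id]


shelf = MemoryShelf(capacity_gib=512)
for n in range(1, 4):  # GPU0 (the master) hands regions to GPU1..GPU3
    shelf.assign(f"gpu{n}", 128)
print(shelf.regions)
```

A real fabric manager would also have to free and resize regions as jobs finish, which this sketch leaves out.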

The CXL hitch today is that CXL 2.0 and its cxl.io protocol are only implemented on Intel XEON 13th generation 64-bit SoCs.

Will Intel offer the same capability on a forthcoming Intel GPU in the near future?

IMNSHO Intel would be crazy, if not brain dead, not to meld CXL into their next gen GPU offer.

DC Operator GPUaaS IT Infrastructure Chart by DeepAI.org Cyberpunk Expert

The NextGen Intel GPU offer?:

CXL Integrated into DC Operator GPUaaS Infrastructures?

That means such a hypothetical Intel cxl enabled GPU package either needs to wire in CXL 2.0 over pciegen5, or CXL 3.0 over pciegen6, directly into the Intel GPU SoC package (CXL 3.0 on pcieGen6.0 is just around the corner; the verification tools from Synopsys have been around for over one year),

which would need to be offered by Intel to both Dell and HPE as IP Custom SoC Configurations, in order to keep AMD from grabbing away their future GPU market opportunity in the same way AMD grabbed the MS Azure AI CoPilot business.

Intel really would have needed to act 1 year ago in order to get a GPU designed with cxl access to coherent memory: a dynamic, virtualized 'master to slave' sharing of the much cheaper cxl shared memory shelf housing DDR4/5 (Memverge, Rambus et al), connected via the cxl.io protocol.

If they acted then, Intel will be doing cxl on-chip integration work now, adapting what they have done for the XEON processors to what is essentially a RISC 64-bit GPU design package, this time with less of the really expensive ADM memory directly attached to the GPU.

In this Intel GPUcxl scenario, Ethernet goes away in the GPUaaS DC infrastructure, replaced by pciegen5 bus and cxl.io protocol running over top to feed into the hardware addressed TLP Transaction Layer 'door bell ring' Protocol of the bus.

Meaning, all GPU master to DDR5/4 Slave io using CXL.io is running over cheap pciegen5 'connected motherboards' via cheap pciegen5 bus extenders equipped with re-timers (to re-condition signals received over fiber 100 meters in length in the Giant DCs), where such cxl connections can travel over 'bonded' dual x8 or x16 lanes interconnected via cheap cxl/gen5 switches.

While it all sounds too complicated and futuristic, the fact is,

Intel is shipping Intel XEON 13th generation processors to Dell and HPE in volume right now in 2024,

all of which will be arriving in systems the big DC operators are installing in their facilities later this year.

The bigger task will be: how does the DC Operator manage such dynamically shared, composable CXL 2.0 gen5pcie infrastructure in GPUaaS service form and,

also manage the dynamic logging and monitoring of both GPU and shared DDR5/4 memory prompt jobs across

what are HA "High Availability" control, monitoring and logging data flows

passing across multiple low bandwidth x1 lanes over the gen5 (pciegen6 within one year) fabric?

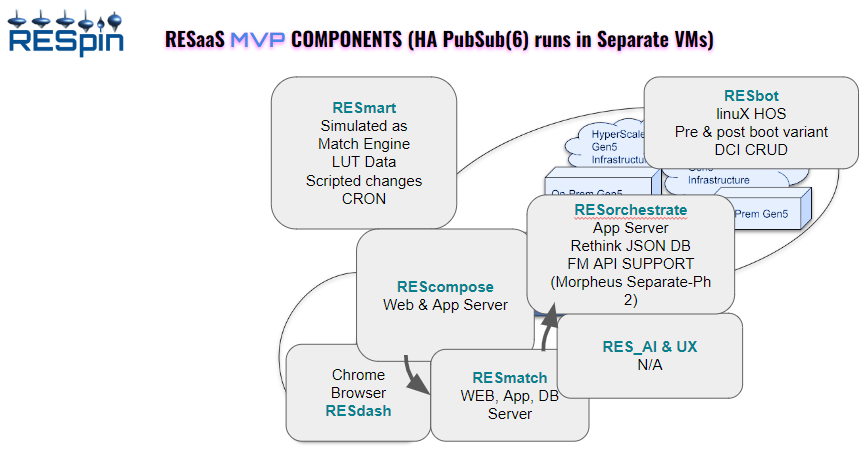

What I am working on... to 'orchestrate' cheaper AIgen Assistant Services 'For the People'; maybe it even enables GenAI + LLM use of cheap CPUs and shared RAM... backed up by super cheap distributed (store the facts once, read them many times) storage from Autonomi

Early CXL 2.0/3.0/3.1 Startup Dynamic Resource Manager :

CXL GPUaaS Shared Memory Use Capable

Copyright 2024 RESpin Technologies All Rights Reserved.

'Carwash' by DeepAI.org Cyberpunk Vertical GenAI Expert "Close but no Cigar"

RESPIN RESMAT:

Tokenizing End User Self-service use of CXL virtualized Expert LLM Resources:

Shared Composable Data Centers:

The key to MSP profitable US $6.99/Month GenAI Expert Fees?

Where CXL tokenization comes into play is having the CXL Fabric Manager Agent

control the GenAI Expert Assistant setup and access to LLM Expert shared memory cxl DDR5/DDR4 cheaper coherent RAM Resources. (The LLM uses the additional memory attached to the GPU hosting the LLM like it was local(coherent), no programming changes required)

The good news is, the CXL FM agent can live anywhere.

The CXL FM agent code, in practice though, will likely get booted from each gen5pcie connected and involved system's BMC, Board Management Controller.

RESpin calls their CXL FM capable agent RESbot, which does much more than map virtual addresses to underlying physical addresses so resources can be shared.

RESbot additionally takes over empty compute, network and storage systems configured by the DC Operator to remote boot from a PXE server. Once loaded by the system, the RESbot operates in RAM only and is designed to prep the system per the DC Operator's Primary MSP rental manifest.

RESbots are capable of handling multiple MSP manifests, to allow 'cohabitate' use of the same RESbot managed resource by Multiple MSPs,

where the order of which MSP gets to use the resource first is controlled by the underlying DC Operator's rule set,

essentially the DC Operator's wholesale service offer to the several MSPs wanting to rent and resell the DC Operator's Data Centre Infrastructure to support their various service offers to end user subscribers.

GPUaaS offered wholesale to the MSPs is therefore going to be priced lower by the DC Operator,

when they can sign up more MSPs to make 'co-habitive' use of their infrastructure more frequently,

so as to reduce DC Operator idle time 2X or more, and also provide the DC Operator with a 2X better ROI.
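A back of the envelope sketch of that arithmetic follows. Every number in it (100 GPUs, 30% versus 65% utilization, the hourly prices) is an assumption picked purely for illustration, and `monthly_revenue` is a hypothetical helper, not anything from RESpin.

```python
def monthly_revenue(gpus, utilization, price_per_gpu_hour, hours=730):
    """Revenue from the GPU hours that are actually rented out in a month."""
    return gpus * hours * utilization * price_per_gpu_hour


# One MSP on dedicated hardware versus several MSPs cohabiting the same GPUs.
single_util, shared_util = 0.30, 0.65
single = monthly_revenue(gpus=100, utilization=single_util, price_per_gpu_hour=2.00)
shared = monthly_revenue(gpus=100, utilization=shared_util, price_per_gpu_hour=1.50)

idle_reduction = (1 - single_util) / (1 - shared_util)
print(f"idle time cut by {idle_reduction:.1f}x")
print(f"revenue ratio at the lower wholesale price: {shared / single:.2f}x")
```

With these made-up inputs the operator halves idle time and still earns more at a 25% lower wholesale price. Whether the ROI gain actually reaches 2X depends entirely on the real utilization and pricing.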

The RESpin RESbot also WON'T do anything else, such as load the workload job and monitor/log the workload job progress,

unless the DC Operator's RESpin Orchestrator sends the RESbot agent crypto utility tokens to do work,

per the MSP submitted manifest, so the Master RESbot has a wallet of its own to handle that part of the dynamic, pay for use operation.
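The 'no tokens, no work' rule can be sketched in a few lines. This is an in-memory toy under loud assumptions: there is no blockchain here, the wallet is just an integer, and `TokenGatedAgent`, the task names and the prices are all hypothetical.

```python
class TokenGatedAgent:
    """Toy agent that refuses to work unless the task's token price is covered."""

    def __init__(self, price_table):
        self.price_table = dict(price_table)  # task name -> price in utility tokens
        self.wallet_balance = 0               # the agent's own (in-memory) wallet
        self.completed = []                   # stand-in for the monitoring/logging side

    def handle(self, task, tokens_sent):
        """Return (status, refund). Work only happens when status == 'done'."""
        price = self.price_table.get(task)
        if price is None:
            return "rejected: unknown task", tokens_sent
        if tokens_sent < price:
            return "rejected: underpaid", tokens_sent
        self.wallet_balance += price
        self.completed.append(task)
        return "done", tokens_sent - price


agent = TokenGatedAgent({"load_workload": 5, "monitor_workload": 1})
print(agent.handle("load_workload", 3))   # not enough tokens, nothing happens
print(agent.handle("load_workload", 7))   # paid, so the work is done and change returned
print(agent.wallet_balance, agent.completed)
```

Returning the unspent tokens rather than keeping them is a design choice of this sketch, not something the RESbot description specifies.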

RESpin therefore,

is using some form of Blockchain with Interchain Support, likely #Cosmos, #Chainlink or similar, to make it easy for both MSPs and their end users to pay cryptocurrency for workload jobs with low fee overhead, much lower than credit card fees.

In standard terms, the cxl FM agent simply maps cxl.io (the cxl transport protocol riding over top of the pcie TLP transport Protocol Layer) interfaces to virtual #CXL Logical Device addresses, set up to represent the underlying pciegen5 system addresses.

All cxl 'Logical Device' addresses plugged into the same interconnected set of pciegen5 system motherboards are learned over top of PCIegen5 or PCIegen6 bus addresses by the cxl FM agent at boot time.

Such addresses get broadcast in a multi-cast manner to all other cxl listening devices plugged into the PCIe fabric(motherboard), behaving a lot like #AppleTalk 2.0 actually.

Just plug the system in and boot it, and each pcie device in the system running an FM will have its upper mapped cxl logical device auto learn all the other cxl addresses plugged into the same 'pciegen5' (motherboard or extended motherboard) network. It all just works, no TCP/IP expertise required.
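The 'plug it in and everyone learns everyone' behaviour can be mimicked with a toy model. This is emphatically not how real CXL enumeration is implemented; `Fabric`, `Device` and the address strings are hypothetical, and the sketch only shows the announce and learn pattern described above.

```python
class Device:
    """Toy fabric participant that keeps a table of the peers it has heard about."""

    def __init__(self, name, address):
        self.name = name
        self.address = address
        self.peers = {}  # peer name -> logical address

    def learn(self, name, address):
        self.peers[name] = address


class Fabric:
    """Toy stand-in for the shared pciegen5 fabric."""

    def __init__(self):
        self.devices = []

    def attach(self, device):
        # The newcomer announces itself to everyone already attached,
        # and learns their addresses in return.
        for peer in self.devices:
            peer.learn(device.name, device.address)
            device.learn(peer.name, peer.address)
        self.devices.append(device)


fabric = Fabric()
gpu0 = Device("gpu0", "ld-0001")
shelf = Device("mem-shelf", "ld-0002")
gpu1 = Device("gpu1", "ld-0003")
for dev in (gpu0, shelf, gpu1):
    fabric.attach(dev)
print(gpu0.peers)
```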

RESpin Technologies is one of those early CXL enabled ISVs focused on turning any DC of any size into a Composable set of resources, accessed by token paying self-serviced users and MSPs.

The #RESpin RESaaS "Resource as a Service" Platform for DC operators running colocation facilities is designed to be configurable via any browser to create a #RESmat "RESource Automat" (functioning like a token operated Car Wash 'Automat' or Laundromat) to enable end user self-service 'job submission'.

The subscriber to GenAI Experts hosted on RESpin sends ERC20 crypto tokens with their 'Engineered Prompt'. Those job tokens are forwarded by the RESmat's Orchestrator element, via the subscriber's MSP service domain, to the Master RESbot ('Resource Bot' software agent) the MSP has rented long term from the DC Operator.

'Resource Bot Software Agent' by GAB.AI SteamPunk Image Generator engineered prompt result- iPad included

That Master RESbot then deposits the utility tokens in the MSP's rented RESbot wallet, which then sends the 'engineered prompt' job to the correct preloaded GPU0 hosted LLM Multi-Expert instance (a front end) and then based on the calculated resources required and the actual Vertical Expert LLM needed,

sends notices to secondary RESbot agents,

which are DC-Operator pre-mounted

(and multi-tenant manifest and job queuing capable)

operating in GPU Metal systems.

These secondary or 'slave' RESbot agents then publish their cxl addressed resources (compute cores/thread 'virtual core-lets' and related cycle speed, Memory, storage capacities and bandwidth lane and speed) availabilities to the Master LLM controller,

(per their subscribed master RESbot SLA portion for the Subscriber:MSP Job)

now hosted on the MSP's GPU0.

The Main Multi-LLM Expert Front End hosted on GPU0 then distributes parts of the Vertical LLM expert required by the job to GPUs<1 to n> by

instructing the assigned RESbot agent to read such LLM experts from either shared cxl.cache memory

(more expensive service rented from the DC Operator)

or

triggering another adjacently assigned RESbot agent managing NVME SSD

(Lower cost service, with a bit more wait time) .

That SSD NVME Storage assigned RESbot agent then reads the LLM expert image from an MSP long term rented block storage volume (which is cheap)

and writes it into shared cxl.cache memory (over the cxl lane speed subscribed to by the MSP long term).
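The two paths (expert already in shared memory versus pulled in from cheap block storage first) boil down to a read-through cache. Here is a minimal sketch, assuming plain Python dicts stand in for the shared cxl.cache memory and the NVMe volume; `fetch_expert` and the tier labels are hypothetical names.

```python
def fetch_expert(name, shared_cache, block_storage):
    """Return (image, tier) for an expert LLM image.

    tier is 'cxl-cache' when the image was already in shared memory and
    'nvme' when it had to be read from block storage first.
    """
    if name in shared_cache:
        return shared_cache[name], "cxl-cache"
    image = block_storage[name]   # KeyError if the MSP never stored this expert
    shared_cache[name] = image    # later jobs for this expert hit the fast path
    return image, "nvme"


block_storage = {"hungarian-travel-agent": b"model-weights-placeholder"}
shared_cache = {}

first = fetch_expert("hungarian-travel-agent", shared_cache, block_storage)
second = fetch_expert("hungarian-travel-agent", shared_cache, block_storage)
print(first[1], second[1])
```

The first prompt for a cold expert pays the wait, and every prompt after that gets the faster (and more expensive to rent) shared memory path.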

Next, the GPU0 LLM main controller distributes parts of that end user subscriber selected expert LLM to other 'rented just for that job' GPUs<1 to n>,

where <n> is the total number of GPUs employed (as calculated by the RESpin Orchestrator),

and cxl_shelf_cache_mem<0-n> represents the RESpin Orchestrator calculated amount of shared cxl.cache memory deployed, and cxl_gpu_shared_mem matches the additional coherent memory each rented GPU needs to run its part of the job.

In both memory resource cases required to execute the job quickly and economically, the resource availability state published by the affected secondary RESbots is collected by the RESaaS Platform Match Engine, where RESpin's own RESaaS AI helps the Orchestrator determine which 'engineered prompt' job goes first, based on the MSP and Subscriber SLAs recorded in the DC Operator's JSON primary manifest and the Match Engine's hard deterministic presentation of resource availability.
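A rough sketch of the 'which job goes first' decision, assuming the SLA boils down to a numeric rank (lower is served first) and the only resource tracked is free GPUs. The `Job` fields and the `schedule` function are hypothetical, and the real Match Engine logic is not described in enough detail here to reproduce.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Job:
    sla_rank: int                       # lower rank = better SLA, served first
    submitted: int                      # tie-breaker: arrival order
    name: str = field(compare=False)
    gpus_needed: int = field(compare=False)


def schedule(jobs, free_gpus):
    """Start jobs in SLA order while GPUs last; smaller jobs may backfill."""
    heap = list(jobs)
    heapq.heapify(heap)
    started, waiting = [], []
    while heap:
        job = heapq.heappop(heap)
        if job.gpus_needed <= free_gpus:
            free_gpus -= job.gpus_needed
            started.append(job.name)
        else:
            waiting.append(job.name)
    return started, waiting


jobs = [
    Job(2, 0, "hobby-art", 2),
    Job(1, 1, "travel-expert", 4),
    Job(1, 2, "legal-expert", 4),
]
started, waiting = schedule(jobs, free_gpus=6)
print(started, waiting)
```

Letting a lower ranked job backfill leftover GPUs keeps the hardware busy, but an operator could just as well forbid it in their rule set.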

And that, my friends, is likely how GenAI MSPs are going to be able to rent out their Vertical Expert GenAI Assistant services

at US $6.99/month I might add, with 50% plus gross margins,

provided the DC Operator has RESpin RESaaS employed to create a rentable, 'per prompt' or per month, cxl enabled GPUaaS Infrastructure.

'Carwash' SteamPunked by DeepAI_org Steampunk Expert, "Not Bad Wall Art"

CXL FM agents 'CarWash' Tokenized by RESpin have their Own utility Token Wallets

The CXL Fabric Manager embedded in each RESbot agent is equipped with its own utility token wallet, plus the additional system and containerized application CRUD, monitoring and logging code needed. It is engineered to only do work and complete tasks, like mapping virtual cxl logical addresses and broadcasting them to others, IF paid some utility tokens to do such work.

The same is true for having extensions to such RESbot agents built to also handle pre-boot configuration of the hardware's operating system based on some manifest sent to the FM extended agent, where the FM agent only triggers such work if paid the correct amount of tokens determined by the DC Operator of the GPUaaS infrastructure.

This means the MSP's GPUaaS front end "GPU0", renting GPUaaS Infrastructure, must serve its own end users with the Multi-Expert information they need by having the RESaaS Platform calculate the price, display it in the browser, and have the end user's Browser or Hardware wallet issue accepted ERC20 tokens to trigger the execution of the 'engineered prompt' jobs they submit through their typically Browser hosted GenAI Assistant App.

Pay Per 'Prompt Complexity'- Determines Price per Prompt Job

Based on the complexity of the engineered prompt, the RESmat engine configured by the DC Operator will ask the subscriber for more or fewer tokens. That is, a different token count is created for each end user's 'engineered' prompt by the RESaaS Orchestrator Element configured in the DC Operator's RESmat, which itself has its own ERC20 Wallet (as configured by the DC Operator, and instantiated into a private wallet instance per MSP).

The unique token associations will determine the prompt job complexity, which results in the 'Orchestrator + AI' pair determining the number of GPUs, the amount of RAM required and the time spent to actually generate a result for the engineered prompt, within the limits of current resource supply provided by the Match Engine (which only shows relevant resources, per the MSP and subscriber rental scope documented in the DC Operator's Primary Manifest).

The 'cost per prompt job' is determined by the time spent utilizing <n> GPUs and the related shared cxl.cache_RAM and cxl.mem_Coherent_RAM (which appears dynamically as local usable RAM to the GPU for just that master GPU0 assigned Vertical GenAI Expert job). The 'engineered prompt job' cost is calculated directly for the MSP and their end user subscriber from the rental costs of the GPUaaS charged to the MSP by the DC Operator.
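Put as arithmetic, the cost per prompt job is GPU seconds plus shared memory seconds, and the token price follows from the MSP's target gross margin. A minimal sketch with entirely assumed rates and a made-up token value; `prompt_job_cost` and `tokens_to_charge` are hypothetical helpers.

```python
import math


def prompt_job_cost(gpu_count, seconds, gpu_rate_per_sec,
                    shared_mem_gib, mem_rate_per_gib_sec):
    """Wholesale cost of one prompt job in USD: GPU time plus shared memory time."""
    gpu_cost = gpu_count * seconds * gpu_rate_per_sec
    mem_cost = shared_mem_gib * seconds * mem_rate_per_gib_sec
    return gpu_cost + mem_cost


def tokens_to_charge(cost_usd, gross_margin, usd_per_token):
    """Tokens to ask for so that (price - cost) / price equals gross_margin."""
    price_usd = cost_usd / (1 - gross_margin)
    return math.ceil(price_usd / usd_per_token)


# Assumed example: 4 GPUs for 3 seconds with 64 GiB of shared memory.
cost = prompt_job_cost(gpu_count=4, seconds=3, gpu_rate_per_sec=0.0005,
                       shared_mem_gib=64, mem_rate_per_gib_sec=0.000002)
tokens = tokens_to_charge(cost, gross_margin=0.5, usd_per_token=0.001)
print(f"cost ${cost:.6f}, charge {tokens} tokens")
```

Rounding up to whole tokens means the MSP never undercharges, at the price of a fraction of a token of overcharge per prompt.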

The cost per engineered prompt job therefore has the MSP create a custom price offer 'set of guidelines' per prompt, per user. The end user prompt price promoted per job is largely determined by how cost effectively the DC Operator engineers the price/performance capabilities of their GPU infrastructure, and by what margin the operator takes per GPUaaS second, which imo will always be 50% less costly for the MSP with CXL made part of the DC Operator's GPUaaS.

So it really means GenAI Vertical Expert Startups need to shop wisely in the future for GPUaaS offers, as all DC Operator infrastructures offering GPUaaS are not created equal. Those employing cxl enabled infrastructure should come out cheaper.

'Apple Pie Slice on Plate' Fairy Tale Expert DeepAI_org ' Nice Mismatch of Prompt vs Expert' lol ;)

CXL enabled Addressable Market Size Enlargement for GPUaaS DC Operators and GenAI Service Startups

Picking your Vertical Market Matters: So Does Matching your Prompt to the Right Vertical Expert

Getting to Composable GPUaaS with CXL will be important to support the Verticalization Boom coming for GenAI. Intel and also AMD will likely bust up the Nvidia Monopoly by 2025, provided they work closely with Dell and HPE to give them the GPU, Network, and Storage CXL composable systems they will need to break into that market in a big way to take share away from Nvidia and the Cuda Koolaid Army of AI and ML Developers.

The GPUaaS Orchestrator engine capability selected by the DC Operator in the near future, likely 2025, will be the key part which determines the size of their 'reachable' addressable subscriber market.

The Orchestrator will need to be adept at receiving ERC20 tokens for job payment, and early heavy users of GenAI Experts are technical people with a need for Tutor or Research Assistant quality advice. So it makes sense for the DC Operator to select a crypto ready solution which can accept ERC20 tokens, convert or wrap them, and send them as utility tokens, together with the prompt job manifest, to a CXL enabled FM extended agent. That agent composes and controls the provisioning of resources dynamically, in the correct economical portions needed to execute the correct LLM expert instances subscribed to by the MSP's end user. This keeps the price per prompt attractive to the greater part of the market: the average person able to afford US$6.99/Month for the Vertical GenAI Expert Assistant App, loaded to their Desktop and their Smartphone.

CXL will enable GPUaaS DC Operators to double their ROI, provided they can engineer a Multi-Tenant MSP model which can efficiently process many subscriber 'engineered prompt" jobs dynamically and efficiently.

CXL Enabled GenAI Vertical Expert Services can be crafted in such a way as to be available 'For the People', at a Fair Price

While the tools needed by GenAI MSPs and ISVs also need to be offered at an affordable price, frankly we developers from the cybersphere are not there yet. So 2025 will likely be the year we see ERC20 tokenized GPUaaS Services start to pop up which employ some form of dynamically composable resource sharing in the DC, enabled by CXL, to service GenAI Per Prompt Pricing that the average subscriber can afford.

When that happens, GenAI 'For the People' at a reasonable monthly price, or price per prompt, will start to emerge and imo become the dominant way in which we will all consume GenAI Expert Assistant Advice at a fair price, with full support for end user White Listed curation of machine learning sources, keeping AI on a LEASH and under the control of "We the People".

So like 'em or lump 'em, Intel does have a role to play here in the next phase of GPUaaS market expansion and the explosion of GenAI Vertical Expert Services now in play.

The only question remaining is how will Cryptosphere impact both markets in the coming year?

Full Disclosure, I am working with a veteran team which is looking to 'Respin' the whole GPUaaS market segment space with pay per prompt profitable pricing for both the MSP and DC Operator being the name of our game,

so 'We the People' take control of AI, at a Fair Price 'per Prompt', paid for using ERC20 cryptos

(Leashing AI Via GenAI 'sources used' White Lists operating in any Browser Mobile or Desktop)

TK over and out

PS- We do need Intel to Step up with cxl enabled GPUs, sooner rather than later.