In this post I want to talk about a scenario that is frightening, yet very likely to occur.

AI is a fascinating topic that made its way into movies and public discussion long ago.

It's a topic that is kind of cool to most of us. Unlike most other dangers to the human species, death by science fiction is fun to think about.

Yet the constant gains made in AI are likely to destroy humanity as we know it one day.

Developing a proper emotional response to the threats of artificial intelligence seems rather hard, for me at least.

There really is only one way to stop the emergence of an AI that is more powerful than us in ways we can hardly conceive, and that would be to stop making any technological progress now.

For this to happen, we would have to suffer a major environmental catastrophe, a global pandemic or a nuclear war. Those are the only things that could halt the constant progress being made in AI. If none of them happens, we are inevitably working towards it.

Given how valuable technology is to our lives, we will continue to evolve it. No matter how big the steps are that we take, ultimately we will get there.

There will be a point where machines are built that are smarter than we are, and at that point they will start improving themselves. It is a point in history that mathematician I. J. Good described as the "intelligence explosion":

> Let an ultraintelligent machine be defined as a machine that can far surpass all the intellectual activities of any man however clever. Since the design of machines is one of these intellectual activities, an ultraintelligent machine could design even better machines; there would then unquestionably be an "intelligence explosion", and the intelligence of man would be left far behind. Thus the first ultraintelligent machine is the last invention that man need ever make.

The concern here is not that machines will turn malevolent at some point. Instead, they will become so much more competent than us that the slightest divergence between their goals and ours could bring about our downfall. Think of how we treat ants: we do not set out to harm them when we walk on the sidewalk, but as soon as they interfere with our goal of having a clean living room, we exterminate them without a second thought. Whether these machines become conscious or not, it is very possible that they will treat us with similar disregard.

This might seem far-fetched to many. At best, many people consider the inevitability of creating superintelligent AI questionable. Yet there are very valid reasons to think that we are steadily progressing towards it:

- Intelligence is the product of information processing

Machines with narrow intelligence already perform specific tasks far more efficiently than humans can. Our own ability to think across the board is the result of processing information far more efficiently than animals do.

- We will continue improving our intelligent machines

Intelligence is undoubtedly our most valuable resource. We depend on it to solve crucial problems we have not yet managed to solve. We want to cure cancer and understand our political, societal and economic systems much better. There is simply too much value in constant improvement not to follow through. The rate of progress is not relevant here; eventually we will create machines that are able to think across many domains.

- Humans are far from the peak of intelligence

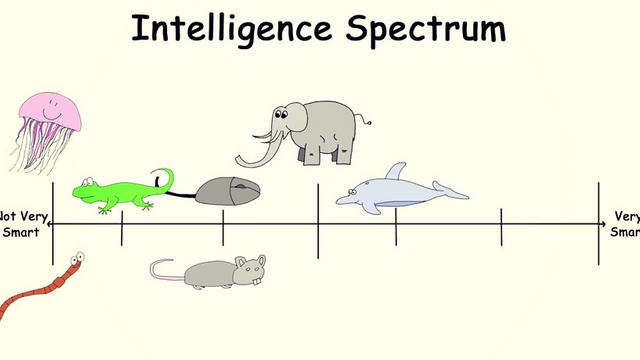

This is what makes the situation so precarious and our ability to assess the risks of AI so limited.

The real spectrum probably extends far beyond this simplistic image. If we build machines that are more intelligent than we are, they are going to explore and exceed this spectrum to heights we cannot imagine.

Let's say we only build an AI that is just as smart as you and me. Electronic circuits function about a million times faster than biochemical ones, so the machine would think a million times faster than the minds that built it. Given that, if it were to run for a single week, it would perform roughly 20,000 years of human intellectual work. This is where humans will lose the ability to understand, much less constrain, a mind making progress at this pace. Even if we were to get this intelligence right on the first try, the implications would be devastating.
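The 20,000-year figure is easy to check yourself. Here is a quick sketch in Python. The function name and constants are my own, and the million-fold speedup is just the rough assumption from above, not a measured value.

```python
# Assumed speed ratio between electronic and biochemical circuits (from the text).
SPEEDUP = 1_000_000

# Average number of weeks in a calendar year.
WEEKS_PER_YEAR = 365.25 / 7


def human_equivalent_years(machine_weeks: float, speedup: float = SPEEDUP) -> float:
    """Years of human-speed thinking done in `machine_weeks` of machine time."""
    human_weeks = machine_weeks * speedup
    return human_weeks / WEEKS_PER_YEAR


if __name__ == "__main__":
    years = human_equivalent_years(1)
    print(f"One machine week is about {years:,.0f} human years")
```

Under these assumptions the result lands a little above 19,000 years, so "20,000 years" is a fair round number.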

The machine could come up with ways to make human employment unnecessary across many domains, with only the rich profiting. That would create a level of wealth inequality humanity has never faced before. It could design, produce and operate sunlight-powered machines that make human labor obsolete, for nothing more than the cost of raw materials. But the common man is not likely to see the fruits of that.

A machine that capable would also be able to wage war at a level of efficiency that would push other nations to start a war as a preemptive strike. The first nation to build an AI that intelligent would gain global dominance in all domains. A week's head start would suffice to ensure it, leaving other nations with few options but to declare war, since they have no idea what the AI would do to achieve its predefined goals. It might well be their extinction.

The question arises: under what conditions can we make progress in AI in a safe manner?

Usually technologies are invented first, and safeguarding measures are implemented later.

First the car was invented; speed limits and age restrictions came later.

This way of doing things is obviously not applicable to the development of AI.

All this makes it seem inevitable that we are on our way to building an omnipotent god that we cannot control and whose actions we cannot understand.

It seems that we only get one shot to get it right. How to achieve that is something we need to start discussing. There is real urgency here. We are entering uncharted waters and so far, I have not heard a convincing idea of how to go about it.

Eager to hear your thoughts and opinions! Let's discuss!

Disclaimer: This is a repost of my original work.